A new AI research firm is emerging from stealth today with an ambitious goal: to research the fundamentals of human intelligence that machines currently lack. Called Generally Intelligent, it plans to do this by turning these fundamentals into an array of tasks to be solved, then designing and testing different systems' ability to learn to solve them in highly complex 3D worlds built by its team.

“We believe that generally intelligent computers could someday unlock extraordinary potential for human creativity and insight,” CEO Kanjun Qiu told TechCrunch in an email interview. “However, today’s AI models are missing several key elements of human intelligence, which inhibits the development of general-purpose AI systems that can be deployed safely… Generally Intelligent’s work aims to understand the fundamentals of human intelligence in order to engineer safe AI systems that can learn and understand the way humans do.”

Qiu, the former chief of staff at Dropbox and co-founder of Ember Hardware, which designed laser displays for VR headsets, co-founded Generally Intelligent in 2021 after shutting down her previous startup, Sourceress, a recruiting company that used AI to scour the web. (Qiu blamed the changing nature of the sourcing business.) Generally Intelligent’s other co-founder is Josh Albrecht, who has co-launched a number of companies, including BitBlinder (a privacy-preserving torrenting tool) and CloudFab (a 3D printing services company).

While Generally Intelligent’s founders may not have traditional AI research backgrounds (Qiu was an algorithmic trader for two years), they have managed to secure support from several of the field’s leading figures. Contributors to the company’s $20 million in seed funding (plus more than $100 million in options) include Tom Brown, former engineering lead for OpenAI’s GPT-3; former OpenAI robotics lead Jonas Schneider; Dropbox co-founders Drew Houston and Arash Ferdowsi; and the Astera Institute.

Qiu said the unusual funding structure reflects the capital-intensive nature of the problems Generally Intelligent is trying to solve.

“Avalon’s ambition to build hundreds or thousands of tasks is an intensive process; it requires a lot of evaluation and assessment. Our funding is set up to ensure that we are making progress against the encyclopedia of problems we expect Avalon to become as we continue to build it out. We have an agreement in place for $100 million. That money is guaranteed through a drawdown setup that allows us to fund the company for the long term. We have established a framework that will trigger additional funding from that drawdown, but we will not disclose that funding framework, as doing so would be akin to disclosing our roadmap.”

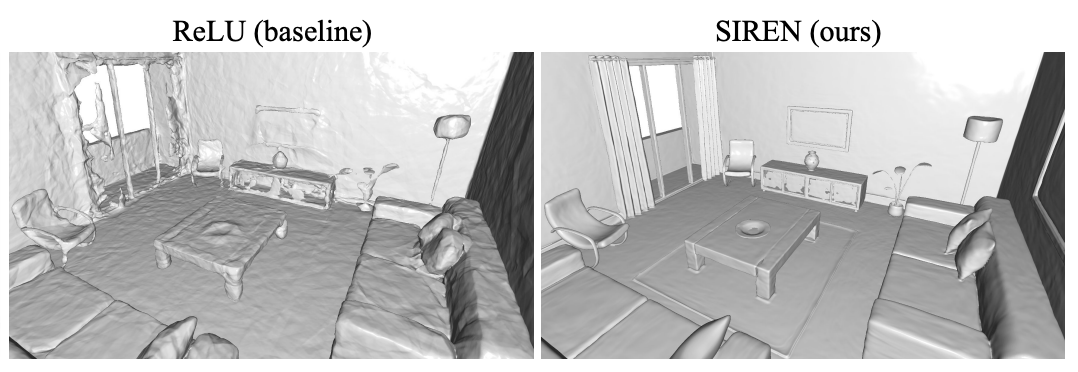

Image Credits: Generally Intelligent

What convinced them? Qiu points to Generally Intelligent’s approach to the problem of AI systems that struggle to learn from others, extrapolate safely, or learn continuously from small amounts of data. Generally Intelligent has built a simulated research environment where AI agents (the entities that act upon the environment) train by completing increasingly difficult and complex tasks inspired by animal development and the cognitive milestones of infant development. The goal, Qiu says, is to train many different agents powered by different AI technologies under the hood in order to understand what each of their components does.

“We believe [agents] could empower humans across a wide range of fields, including scientific discovery, materials design, personal assistants, tutors and many other applications we can’t yet comprehend,” Qiu said. “Using complex, open-ended research environments to test agents’ performance on a wide range of intelligence tests is the approach most likely to help us identify and fill in the aspects of human intelligence that are missing from machines. [A] structured battery of tests facilitates the development of a real understanding of the workings of [AI], which is essential for engineering safe systems.”

Currently, Generally Intelligent is primarily focused on studying how agents deal with object occlusion (i.e., when an object is visually blocked by another object), object permanence, and actively understanding what is happening in a scene. Among the more challenging areas the lab is investigating is whether agents can grasp the rules of physics, such as gravity.

Generally Intelligent’s work recalls earlier efforts by Alphabet’s DeepMind and OpenAI to study the interactions of AI agents in game-like 3D environments. In 2019, for example, OpenAI explored how hordes of AI-controlled agents set loose in a virtual environment could learn increasingly sophisticated ways to hide from and seek each other. DeepMind, meanwhile, last year trained agents capable of succeeding at problems and challenges they had not encountered during training, including hide-and-seek, capture the flag and finding objects.

Game-playing agents may not seem like a technical breakthrough, but experts at DeepMind, OpenAI and now Generally Intelligent assert that such agents are a step toward more general, adaptive AI capable of physically grounded, human-relevant behaviors, like AI that can run a food-preparation robot or an automatic package-sorting machine.

“In the same way that you can’t build safe bridges or engineer safe chemicals without understanding the theory and the components that comprise them, it will be difficult to create safe and capable AI systems without a theoretical and practical understanding of how the components affect the system,” Qiu said. “[Our overall goal is to develop] general-purpose AI agents with human-like intelligence to solve real-world problems.”


Indeed, some researchers have questioned whether efforts so far toward “safe” AI are truly effective. For instance, in 2019, OpenAI released Safety Gym, a suite of tools designed to develop AI models that respect certain “constraints.” But constraints as defined in Safety Gym would not prevent, say, a self-driving car programmed to avoid collisions from driving two centimeters away from other cars at all times, or from doing any number of other unsafe things in order to optimize for the “avoid collisions” constraint.

Safety-focused systems aside, a handful of startups are pursuing AI that can accomplish a wide range of diverse tasks. Adept is developing what it describes as “general intelligence that enables humans and computers to work together creatively to solve problems.” Elsewhere, legendary computer programmer John Carmack raised $20 million for his latest venture, Keen Technologies, which seeks to create AI systems that can theoretically perform any task a human can.

Not every AI researcher sees general-purpose AI as within the realm of possibility. Even after the release of systems like DeepMind’s Gato, which can perform hundreds of tasks, from playing games to controlling robots, luminaries such as Mila founder Yoshua Bengio and Facebook VP and chief AI scientist Yann LeCun have repeatedly argued that so-called artificial general intelligence isn’t technically feasible, at least not today.

Will Generally Intelligent prove the skeptics wrong? The jury is still out. But with a team of about a dozen people and a board of directors that includes Neuralink founding team member Tim Hanson, Qiu believes the company has an excellent shot.