- At OCP Summit 2022, we’re announcing Grand Teton, our next-generation platform for AI at scale, which we will contribute to the OCP community.

- We’re also sharing new innovations designed to support data centers as they advance to support new AI technologies:

- A new, more efficient version of Open Rack.

- Our air-assisted liquid cooling (AALC) design.

- Grand Canyon, our new hard drive (HDD) storage system.

- You can view AR/VR models of our latest hardware designs at: https://metainfrahardware.com

Empowering Open, the theme of this year’s Open Compute Project (OCP) Global Summit, has always been at the heart of Meta’s design philosophy. Open source hardware and software is, and always will be, a pivotal tool for helping the industry solve problems at scale.

Today, some of the biggest challenges our industry faces at scale revolve around artificial intelligence. How can we continue to facilitate and run the models that drive the experiences behind today’s innovative products and services? And what will it take to enable the AI behind the innovative products and services of the future? As we move toward the next computing platform, the metaverse, the need for new open innovations to power AI becomes even clearer.

As a founding member of the OCP community, Meta has always embraced open collaboration. Our history of designing and contributing next-generation AI systems dates back to 2016, when we first announced Big Sur. This work continues today and keeps evolving as we develop better systems to serve our AI workloads.

After 10 years of building world-class data centers and distributed computing systems, we have come a long way from developing hardware independently of the software stack. Our AI and machine learning (ML) models are becoming increasingly powerful and complex, and they need increasingly high-performance infrastructure to match. Deep learning recommendation models (DLRMs), for example, have on the order of tens of trillions of parameters and can require a zettaflop of compute to train.
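To put “tens of trillions of parameters” in perspective, here is a minimal back-of-the-envelope sketch of the raw storage those weights alone would occupy. The 10 and 50 trillion parameter counts and the 4-byte (fp32) and 2-byte (fp16) widths are illustrative assumptions, not published DLRM figures, and the estimate deliberately ignores optimizer state, activations, and any compression.

```python
# Back-of-the-envelope storage estimate for DLRM-scale parameter counts.
# Assumptions (not from the source): every parameter is stored at a fixed
# width, 4 bytes for fp32 or 2 bytes for fp16, with no compression, and
# optimizer state and activations are ignored.

def param_memory_tb(num_params: float, bytes_per_param: int) -> float:
    """Return raw parameter storage in terabytes (1 TB = 1e12 bytes)."""
    return num_params * bytes_per_param / 1e12


if __name__ == "__main__":
    # Illustrative points within "tens of trillions" of parameters.
    for trillions in (10, 50):
        n = trillions * 1e12
        for name, width in (("fp32", 4), ("fp16", 2)):
            print(f"{trillions}T params @ {name}: {param_memory_tb(n, width):.0f} TB")
```

Even at the low end, 10 trillion fp32 parameters come to roughly 40 TB, far beyond the memory of any single accelerator, which is why models of this size have to be spread across many devices.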

At this year’s OCP Summit, we’re sharing our journey as we continue to enhance our data centers to meet Meta’s, and the industry’s, large-scale AI needs. From new platforms for training and running AI models, to power and rack innovations that help our data centers handle AI more efficiently, to new developments with PyTorch, our signature machine learning framework, we’re releasing open innovations to help solve industry-wide challenges and push AI into the future.

Grand Teton: AI platform

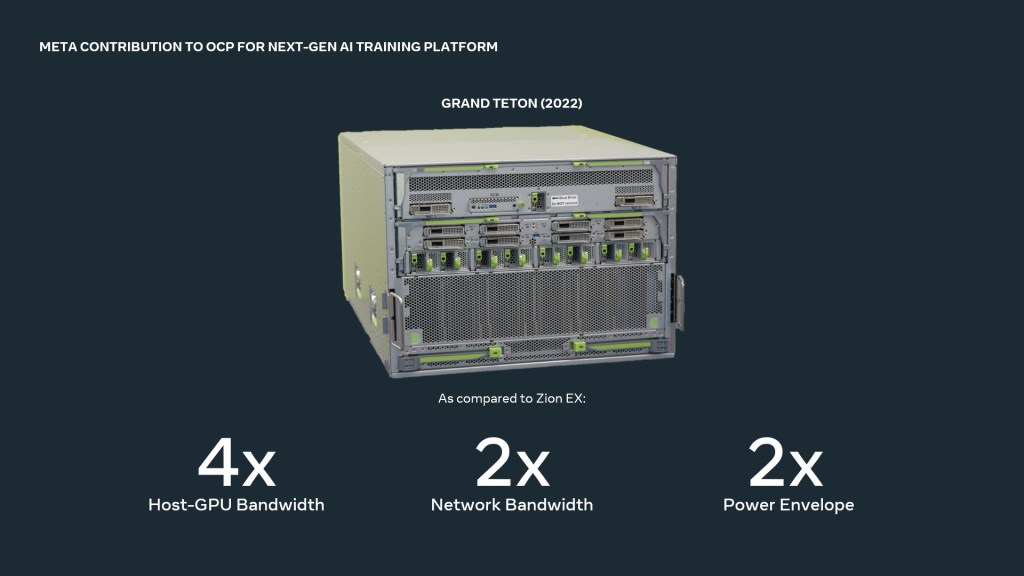

We’re announcing Grand Teton, our next-generation GPU-based hardware platform and a follow-up to our Zion-EX platform. Grand Teton has multiple performance improvements over its predecessor, Zion, such as 4x the host-to-GPU bandwidth, 2x the compute and data network bandwidth, and 2x the power envelope. Grand Teton also has an integrated chassis, unlike Zion-EX, which comprises multiple independent subsystems.

As AI models become more complex, so do their associated workloads. Grand Teton is designed with greater compute capacity to better support memory-bandwidth-bound workloads at Meta, such as our open source DLRMs. Grand Teton’s expanded operational compute power envelope also optimizes it for compute-bound workloads, such as content understanding.

The previous-generation Zion platform consists of three boxes: a CPU head node, a switch sync system, and a GPU system. It requires external cabling to connect everything. Grand Teton integrates all of this into a single chassis with fully integrated power, control, compute, and fabric interfaces for better overall performance, signal integrity, and thermal performance.

This high level of integration greatly simplifies the deployment of Grand Teton, allowing it to be introduced into data center fleets faster and with fewer potential points of failure, while providing rapid scaling with increased reliability.

Rack and power innovations

Open Rack v3

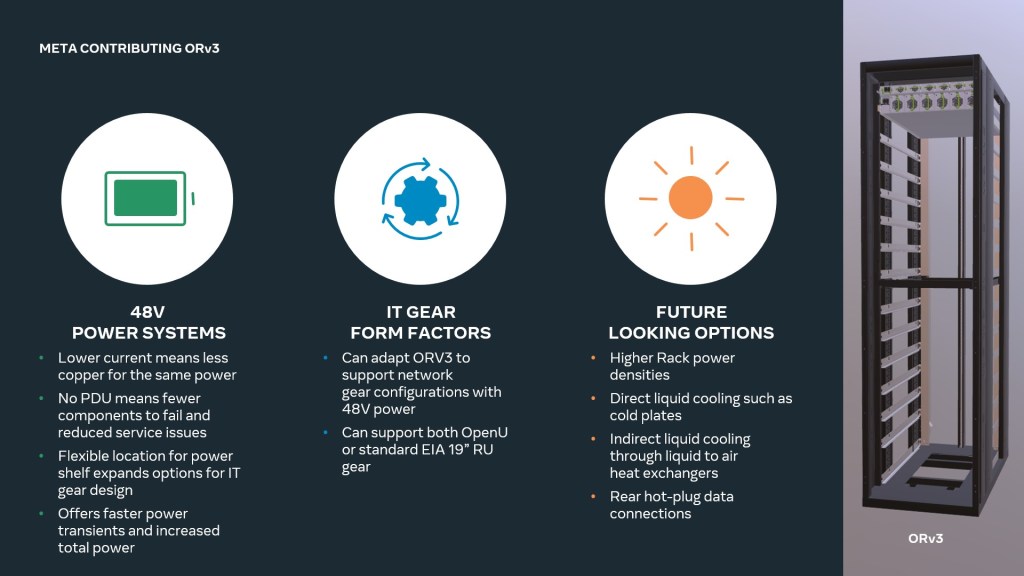

The latest edition of our Open Rack hardware offers a common rack and power architecture for the entire industry. To bridge the gap between current and future data center needs, Open Rack v3 (ORV3) is designed with flexibility in mind, with a frame and power infrastructure capable of supporting a wide range of use cases, including Grand Teton.

ORV3’s power shelf is not bolted to the busbar. Instead, the power shelf can be installed anywhere in the rack, allowing for flexible rack configurations. Multiple shelves can be installed on a single busbar to support 30 kW racks, while the 48 VDC output will support the higher power transmission needs of future AI accelerators.

It also features an improved battery backup unit (BBU), which increases backup capacity to four minutes, compared with the previous model’s 90 seconds, and has a power capacity of 15 kW per shelf. Like the power shelf, this backup unit can be installed anywhere in the rack for customization, and it delivers 30 kW when installed as a pair.
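The ORV3 figures lend themselves to a quick sanity check. The sketch below is back-of-the-envelope arithmetic, not an ORV3 design tool: it uses I = P / V to show the busbar current a 30 kW rack draws at 48 VDC, compares it with a hypothetical 12 V bus (an illustrative comparison point, not an ORV3 spec), and converts the BBU ratings into delivered energy, assuming the four-minute runtime holds at full rated load. The helper names are hypothetical.

```python
# Rough power arithmetic for the ORV3 figures quoted in the text:
# a 30 kW rack, 48 VDC busbar output, a 15 kW BBU per shelf, 30 kW for a
# pair, and 4 minutes of backup versus the previous model's 90 seconds.
# Assumptions: the 12 V comparison bus is hypothetical, and the 4-minute
# runtime is taken to apply at full rated load.

def busbar_current_a(power_w: float, voltage_v: float) -> float:
    """DC current drawn for a given load and bus voltage (I = P / V)."""
    return power_w / voltage_v


def backup_energy_kwh(power_kw: float, minutes: float) -> float:
    """Energy a backup unit delivers while holding `power_kw` for `minutes`."""
    return power_kw * minutes / 60.0


if __name__ == "__main__":
    rack_w = 30_000.0
    i_48 = busbar_current_a(rack_w, 48.0)
    i_12 = busbar_current_a(rack_w, 12.0)
    # Resistive (I^2 * R) loss scales with current squared for a fixed conductor.
    loss_ratio = (i_12 / i_48) ** 2
    print(f"48 V: {i_48:.0f} A, 12 V: {i_12:.0f} A, I^2R loss ratio {loss_ratio:.0f}x")

    print(f"single BBU (15 kW, 4 min): {backup_energy_kwh(15, 4):.1f} kWh")
    print(f"BBU pair (30 kW, 4 min):   {backup_energy_kwh(30, 4):.1f} kWh")
    print(f"runtime gain over 90 s: {4 * 60 / 90:.2f}x")
```

At 48 VDC, a 30 kW rack draws 625 A; the same load on a 12 V bus would need 2,500 A, with 16x the resistive loss in the same conductor. That gap is the basic reason a higher distribution voltage suits the growing power draw of AI accelerators.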

Meta chose to develop nearly every component of the ORV3 design through OCP from the start. While an ecosystem-led design can mean a longer design process than a traditional in-house design, the end product is a holistic infrastructure solution that can be deployed at scale with improved flexibility, full supplier interoperability, and a diverse supplier ecosystem.

You can join our efforts at: https://www.opencompute.org/projects/rack-and-power

Machine learning cooling trends vs. cooling limits

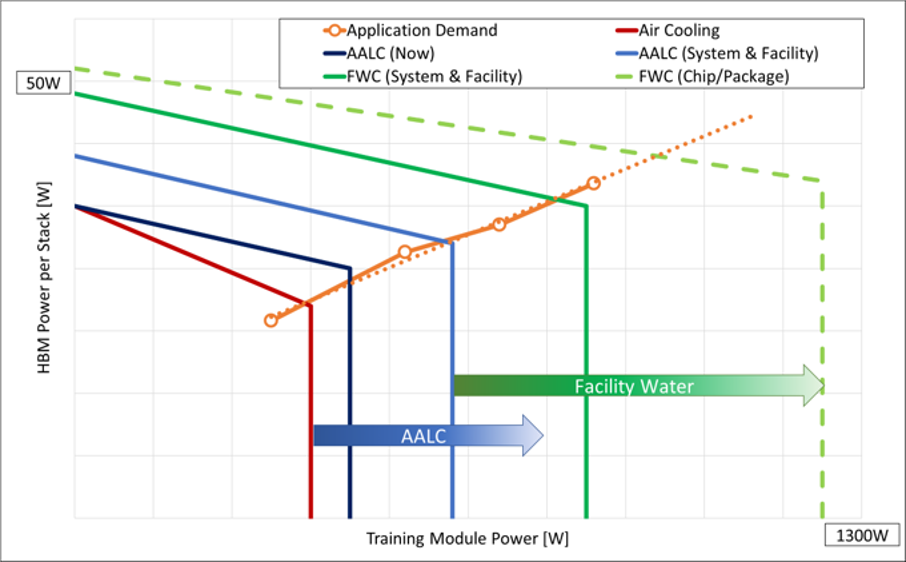

With higher socket power comes increasingly complex thermal management. The ORV3 ecosystem is designed to accommodate several different liquid cooling strategies, including air-assisted liquid cooling and facility water cooling. The ORV3 ecosystem also includes an optional blind-mate liquid cooling interface design, providing dripless connections between the IT gear and the liquid manifold, which makes the IT gear easier to service and install.

In 2020, we formed a new OCP focus group, the ORV3 Blind Mate Interfaces Group, with other industry experts, suppliers, solution providers, and partners. The group is developing interface specifications and solutions, such as rack interfaces and structural enhancements to support liquid cooling, blind-mate quick (liquid) connectors, manifolds, hose and tubing requirements, blind-mate IT gear design concepts, and a number of white papers on best practices.

You may be asking yourself why Meta focuses on all of these areas. The rising power trends we’re seeing, and the need for advances in liquid cooling, are forcing us to think differently about every element of our platform, rack, power, and data center design. The chart below shows projections of high-bandwidth memory (HBM) and training module power growth over several years, as well as how these trends will require different cooling technologies over time and the limits associated with those technologies.

You can join our efforts at: https://www.opencompute.org/projects/cooling-environment

Grand Canyon: Next-generation storage for AI infrastructure

Supporting ever-evolving AI models also means having the best possible storage solutions for our AI infrastructure. Grand Canyon is Meta’s next-generation storage platform, featuring improved hardware security and future upgrades of key commodities. The platform is designed to support higher-density hard drives without performance degradation and with improved power utilization.

Launching the PyTorch Foundation

Since 2016, when we first partnered with the AI research community to create PyTorch, it has grown into one of the leading platforms for AI research and production applications. In September of this year, we announced the next step in PyTorch’s journey to accelerate innovation in AI: PyTorch is moving under the Linux Foundation umbrella as a new, independent PyTorch Foundation.

While Meta will continue to invest in PyTorch and use it as our primary framework for AI research and production applications, the PyTorch Foundation will act as a responsible steward. It will support PyTorch through conferences, training courses, and other initiatives. The Foundation’s mission is to foster and sustain an ecosystem of vendor-neutral projects with PyTorch that will help drive industry-wide adoption of AI tooling.

We remain fully committed to PyTorch. We believe this approach is the fastest and best way to build and deploy new systems that will not only meet real-world needs, but also help researchers answer fundamental questions about the nature of intelligence.

The future of artificial intelligence infrastructure

At Meta, we’re all-in on artificial intelligence. But the future of artificial intelligence won’t come from us alone. It will come from collaboration: sharing ideas and technologies through organizations like OCP. We’re eager to continue working together to build new tools and technologies to drive the future of AI, and we hope you will join us in our various efforts. Whether it’s developing new approaches to AI today or fundamentally rethinking hardware and software design for the future, we’re excited to see what the industry has in store next.